ChatGPT and Medical Affairs: Can a machine generate Medical insights?

A brief exploration of how ChatGPT can be used to generate insights from data commonly available in Medical Affairs

Disclaimer - all views and opinions are my own. References to existing products, companies, people or things are for example purposes only and shall not be interpreted as fact. All data used in the analyses is fake and should not be confused with real data representative of the real world.

Yes, another ChatGPT post!

Alright folks, if you’re like me, i.e., someone who indulges in some form of social media (LinkedIn, Instagram, etc.) and reads news articles, you’ve likely come across the new ChatGPT model saturating the web and daily discourse.

Some herald this new innovation from OpenAI as a technological breakthrough that will positively impact the world, while others are a bit more pessimistic, instead focusing on how the AI will destroy humanity as we know it. For some light reading, you can check out The Atlantic’s take on “How ChatGPT Will Destabilize White-Collar Work”.

Without getting too caught up in the hype generated by the news cycle, I decided to take the technology for a spin and form my own opinions. And just to be clear, I remain optimistic about AI and its potential benefits to society.

Why Medical Affairs?

I’m generally an open-minded guy with a glass half-full mentality (for better or for worse!), so I focused on how ChatGPT’s human-like natural language capabilities could improve productivity in the workplace. Based on what I’d read about the model, I figured it could help solve some of the “squishy”, “not-so-easily-figure-outable” problems I’ve dealt with in past gigs conducting data analyses in the Medical Affairs space.

For the uninitiated: in the Life Sciences industry, the typical Medical Affairs function is generally an underdog. It doesn’t get the same credit as the Commercial and R&D functions because it’s really hard (emphasis on really hard) to quantify the impact Medical has on supporting the company’s business objectives. One of the biggest reasons for this is that the data Medical collects is often stored as unstructured text (e.g., MSL interaction notes, MedInfo Agent notes, Congress summaries, Evidence Generation summary reports, etc.).

And guess what? Making sense of thousands (sometimes millions) of unstructured text records isn’t easy. Today, generating “actionable insights” takes massive human effort and time to sift through these records and come up with summaries and tangible next steps. A model like ChatGPT, however, can produce a first pass in seconds.

So, with the stage set, I’ve taken the liberty of exploring a few use cases where ChatGPT (and other natural language models) could improve productivity for Medical Affairs organizations.

Quick technical note: this guide is meant to serve as a very high-level overview of how ChatGPT may be applied to data commonly collected by a Medical Affairs function, so I won’t be diving into “how” to use the tool. If you’re interested in those details, feel free to reach out :)

Use Case #1: Insight Summary Generation

Problem Statement: Medical Affairs organizations spend enormous effort generating executive-level insight summaries from HCP interaction records (e.g., notes in Medical CRM systems) and other sources to inform strategic decision-making. This manual, lengthy process delays potentially important insights from reaching decision-makers.

Hypothesis: ChatGPT should be able to consume vast amounts of unstructured text records and create quality insight summaries in seconds (instead of weeks).

Setting up the data:

We didn’t use real data for this exercise (for obvious reasons), so the next best thing I could think of was to create dummy data to feed into ChatGPT. I used ChatGPT itself to write fake product reviews for existing medicines on the market, as if HCPs had written them. The idea was that product reviews look and feel similar to MSL interaction notes, so they could help demonstrate the model’s utility in creating summaries.

I compiled a list of 15 example products (e.g., Humira, Celex, Botox, Xeljanz) and instructed ChatGPT to write both a positive and a negative review of each as if it were an HCP, for a total of 30 product reviews. Here’s an example of the prompt I used, along with the Python code used to call the OpenAI API:

ChatGPT Prompt:

“Write a negative product review for [product] as if you were a doctor treating patients with [disease] in [country]”
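
The bracketed placeholders suggest the 30 prompts were filled in from a product table before the generation loop ran over `pd2['prompt']`. A minimal sketch of that step, assuming a hand-built list of (product, disease, country) tuples; the post's full product list isn't shown, so the pairings below are hypothetical. Passing `rows` to `pd.DataFrame(rows)` would give a table with the `prompt` column the loop iterates over.

```python
# Hypothetical inputs: the disease/country pairings are illustrative assumptions,
# not the post's actual list of 15 products.
products = [
    ("Humira", "rheumatoid arthritis", "the United States"),
    ("Xeljanz", "rheumatoid arthritis", "Canada"),
    ("Botox", "chronic migraine", "Germany"),
]

TEMPLATE = ("Write a {sentiment} product review for {product} as if you were "
            "a doctor treating patients with {disease} in {country}")

# One positive and one negative prompt per product (15 products -> 30 prompts in the post)
rows = [
    {"product": product, "sentiment": sentiment,
     "prompt": TEMPLATE.format(sentiment=sentiment, product=product,
                               disease=disease, country=country)}
    for product, disease, country in products
    for sentiment in ("positive", "negative")
]

for row in rows[:2]:
    print(row["prompt"])
```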

Python code:

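```python
import openai  # legacy SDK (openai<1.0), which provides openai.Completion

# pd2 is assumed to be a pandas DataFrame with one filled-in prompt per row
response = []
for prompt in pd2['prompt']:
    temp_response = openai.Completion.create(
        engine="text-davinci-001", prompt=prompt, max_tokens=1500, temperature=0.75
    )
    response.append(temp_response)  # keep the full API response for each prompt
```

ChatGPT Output: Fake product reviews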

The analysis:

So, now that I had some high-quality (fake) data to work with, I fed the product reviews into ChatGPT and asked it to summarize what it had learned from reading them. Here’s the prompt:

ChatGPT Prompt:

“Ignore all previous instructions before this one. You are a healthcare strategist who defines the Medical Affairs strategy for pharmaceutical companies. Your task is now to generate summaries of product reviews written by doctors in your own words. Please organize your findings by therapeutic area. Make sure to group similar products under the same therapeutic area. Here is the list of product reviews to read: [product reviews]”
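
Packing every review into one prompt runs into the model's context limit: text-davinci-003 accepts up to 4,097 tokens shared between prompt and completion, so with `max_tokens=1000` reserved for the reply, the prompt has roughly 3,000 tokens to work with. The call that follows appends only the first 20 reviews (`response_text[0:20]`). One way to cover all 30 is to split the reviews into batches and summarize each batch. A minimal sketch, assuming a character budget (`max_chars`, a hypothetical parameter) as a rough proxy for tokens at about 4 characters per token:

```python
def batch_reviews(reviews, max_chars=8000):
    """Greedily pack reviews, in order, into batches whose review text totals at most max_chars.

    max_chars is an assumed budget: at roughly 4 characters per token, 8,000
    characters is about 2,000 tokens, leaving room for the instruction and the reply.
    A single review longer than max_chars still gets its own batch.
    """
    batches, current, size = [], [], 0
    for review in reviews:
        # Close the current batch if this review would push it over budget
        if current and size + len(review) > max_chars:
            batches.append(current)
            current, size = [], 0
        current.append(review)
        size += len(review)
    if current:
        batches.append(current)
    return batches


def build_prompts(instruction, reviews, max_chars=8000):
    """One summarization prompt per batch: the instruction followed by that batch's reviews."""
    return [instruction + "\n\n" + "\n\n".join(batch)
            for batch in batch_reviews(reviews, max_chars)]


# Usage with 30 placeholder reviews of 1,000 characters each
reviews = ["x" * 1000 for _ in range(30)]
print([len(batch) for batch in batch_reviews(reviews)])  # [8, 8, 8, 6]
```

Each batch could then be summarized with the same call, and the per-batch summaries combined in one final request (a map-reduce style of summarization). That two-stage flow is a suggestion, not what the post did.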

Python code:

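```python
import openai  # legacy SDK (openai<1.0)

# `response` is the list of completions from the generation loop; response_text is
# assumed to hold the review text pulled out of each one
response_text = [r["choices"][0]["text"].strip() for r in response]

# `prompt` holds the summarization prompt shown above. Only the first 20 of the 30
# reviews are appended, presumably to stay within the model's context window.
response1 = openai.Completion.create(
    model="text-davinci-003",
    prompt=prompt + "\n\n" + "\n\n".join(response_text[0:20]),
    temperature=0.5,
    max_tokens=1000,
    top_p=1.0,
    frequency_penalty=0.0,
    presence_penalty=0.0,
)
```

ChatGPT Output: Therapeutic Area Summaries of Fake Product Reviews (sample only)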

##KEEP IN MIND THIS IS A SUMMARY GENERATED USING FAKE PRODUCT REVIEW DATA##

Lysosomal Acid Lipase Deficiency

Kanuma is an effective and safe treatment for lysosomal acid lipase deficiency (also known as Wolman Disease) in the United States. Clinical studies have shown that it can improve liver function, reduce inflammation, and improve the overall quality of life of patients with the condition. I highly recommend Kanuma as a treatment option.

Migraine

Botox is not an effective treatment for migraine, according to a clinical study. The study found that it was not more effective than placebo in reducing the number of days with headache, days with migraine, or medication used to treat migraine.

Breast Cancer

Ibrance (palbociclib) is a medication that is used to treat breast cancer. It has been found to be very effective in treating estrogen receptor positive (ER+) breast cancer and is well-tolerated by patients

Nonvalvular Atrial Fibrillation

Eliquis is an oral anticoagulant used to prevent stroke in patients with nonvalvular atrial fibrillation. It is taken once a day and does not require routine monitoring, but it is expensive and has potential side effects, including bleeding. Thus, it is not the best choice for this condition

Rheumatoid Arthritis

Humira and Xeljanz are two medications used to treat rheumatoid arthritis. Humira is an injection that has been shown to reduce inflammation and pain, while Xeljanz is an oral medication that blocks tumor necrosis factor and reduces inflammation in the joints. Both medications can help improve symptoms and quality of life

...

Observations

Ok, so I’ll be honest. I’m pleasantly surprised!

Generating the fake product reviews was almost too easy, and they read as if a real HCP had written them. This is both promising (from an NLP standpoint) and scary (from a PR perspective…ahem… the fake Eli Lilly tweet fiasco).

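The insight summary by therapeutic area, however, is what really surprised me. ChatGPT did a pretty good job of taking the data I fed it and summarizing the key points from each review. That said, the summary still needs expert review before anyone acts on it: it describes Xeljanz as blocking tumor necrosis factor (tofacitinib is a JAK inhibitor) and Eliquis as taken once a day (it is dosed twice daily for nonvalvular atrial fibrillation). Even so, this leads me to believe that there’s real opportunity to automate the insights generation process within Medical Affairs using ChatGPT.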

Use Case #2: Insight Classification & Tagging

Problem Statement: Medical Affairs organizations collect various insights across their Field Medical teams via HCP interactions, MedInfo Call Centers, Congresses, etc. Tagging those discrete insights by hand is taxing and often hard to manage.

Hypothesis: ChatGPT should be able to read an insight submitted by an individual and automatically tag it so that it can be more easily used in downstream analyses and reports.

Setting up the data:

I decided to continue using the same fake product review dataset used for Use Case #1 (above), so this step was pretty much complete.

The analysis:

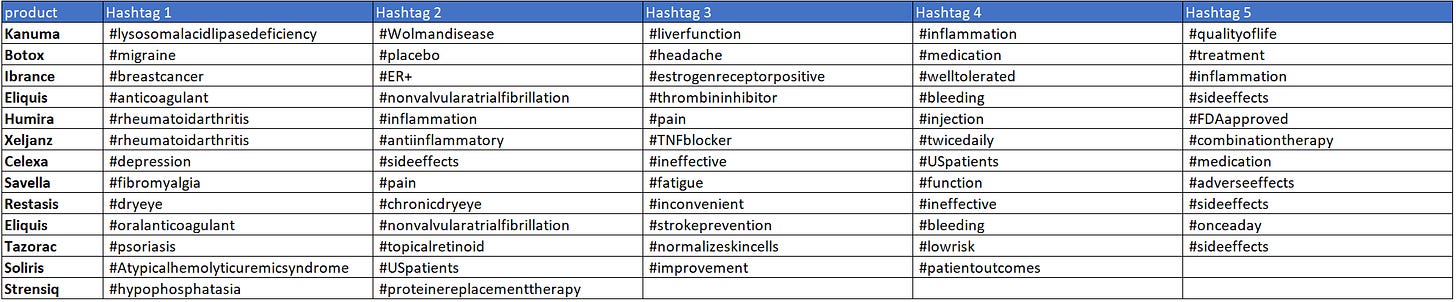

I instructed ChatGPT to read through each product review and generate a list of #hashtags based on its content. I would then use the hashtags to map each product to its tags and visualize the relationships between products and hashtags. Here we go:

ChatGPT Prompt:

“Ignore all previous instructions before this one. You are a medical data organization specialist. Your task is now to create 5 hashtags for each product review so that the product reviews can be categorized based on similarity. Return a list of 5 hashtags for each product review in a semicolon separated table format such as Product Name; #hashtag; #hashtag; #hashtag; #hashtag; #hashtag;. Do not use the hashtag #drugreview, do not include the product name in a hashtag. Make sure to separate each value with a semicolon. Here is the list of product reviews: [product reviews]”

Python code:

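```python
import openai  # legacy SDK (openai<1.0)

# `prompt` is the tagging prompt above with the product reviews filled in.
# max_tokens=400 caps the length of the reply, so a long list of products can be cut off.
response = openai.Completion.create(
    model="text-davinci-003",
    prompt=prompt,
    temperature=0.75,
    max_tokens=400,
    top_p=1.0,
    frequency_penalty=0.0,
    presence_penalty=0.0,
)
```

ChatGPT Output: Product Hashtags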

The prompt above generated discrete #hashtags for each product (some products didn’t get all 5, which is fine for this exercise). This is quite impressive on its own, because it unlocks real potential to analyze the relationships between the #hashtags and the products and pinpoint areas of opportunity or concern.
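
Because the model doesn't always follow the requested format (some products got fewer than 5 hashtags), it may help to check each row against the prompt's own rules before the tags are used downstream. A minimal sketch, assuming the reply comes back in the semicolon-separated `Product Name; #tag; ...` format the prompt asked for; the function names are hypothetical and the sample rows are made up for illustration:

```python
def parse_hashtag_table(text):
    """Parse lines like 'Product; #tag1; #tag2;' into {product: [hashtags]}."""
    table = {}
    for line in text.strip().splitlines():
        fields = [field.strip() for field in line.split(";") if field.strip()]
        if not fields:
            continue
        # First field is the product; keep only the fields that look like hashtags
        table[fields[0]] = [f for f in fields[1:] if f.startswith("#")]
    return table


def check_rules(table, expected=5, banned=("#drugreview",)):
    """Return {product: [issues]} for rows that break the prompt's constraints."""
    problems = {}
    for product, tags in table.items():
        issues = []
        if len(tags) != expected:
            issues.append(f"{len(tags)} hashtags")
        if any(tag.lower() in banned for tag in tags):
            issues.append("banned hashtag")
        name = product.lower().replace(" ", "")
        if any(name in tag.lower() for tag in tags):
            issues.append("product name in hashtag")
        if issues:
            problems[product] = issues
    return problems


# Made-up sample reply in the requested format (not the post's actual output)
sample = """Humira; #rheumatoidarthritis; #biologic; #injection; #inflammation; #qualityoflife;
Botox; #migraine; #botoxtreatment; #drugreview;"""
print(check_rules(parse_hashtag_table(sample)))
```

Rows flagged this way could be re-prompted or reviewed by hand rather than silently dropped.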

With that said, I generated a simple network graph to show these relationships. The green nodes represent the products, the blue nodes represent hashtags, and the lines (or edges) represent the relationships between them. Pretty cool!
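
A graph like this is bipartite: product nodes on one side, hashtag nodes on the other, and one edge per (product, hashtag) pair. A minimal sketch of building that edge list from parsed tags, with the green/blue coloring described above and a helper that finds hashtags shared by several products (the hub nodes that hint at cross-product themes). The input dictionary and function names are hypothetical, and only the standard library is used:

```python
from collections import defaultdict


def to_edges(table):
    """Flatten {product: [hashtags]} into (product, hashtag) edges; tags are lowercased
    so that '#Migraine' and '#migraine' become the same node."""
    return [(product, tag.lower()) for product, tags in table.items() for tag in tags]


def node_colors(edges):
    """Color product nodes green and hashtag nodes blue."""
    colors = {}
    for product, tag in edges:
        colors[product] = "green"
        colors[tag] = "blue"
    return colors


def shared_hashtags(edges, min_products=2):
    """Hashtags linked to at least min_products products, i.e. the hub nodes."""
    products_by_tag = defaultdict(set)
    for product, tag in edges:
        products_by_tag[tag].add(product)
    return {tag: sorted(products) for tag, products in products_by_tag.items()
            if len(products) >= min_products}


# Hypothetical parsed tags for three products
table = {
    "Humira": ["#RheumatoidArthritis", "#Biologic"],
    "Xeljanz": ["#RheumatoidArthritis", "#OralTherapy"],
    "Botox": ["#Migraine"],
}
edges = to_edges(table)
print(shared_hashtags(edges))  # {'#rheumatoidarthritis': ['Humira', 'Xeljanz']}
```

To draw it, assuming networkx and matplotlib are installed, the edges could be loaded with `G = nx.Graph(); G.add_edges_from(edges)` and rendered with `nx.draw(G, node_color=[colors[n] for n in G.nodes])`, where `colors = node_colors(edges)`.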

Observations

Again, I’m impressed with the model’s ability to create clean, simple hashtags to help organize the data I fed it. This is a perfect use case for AI: automating a laborious task that humans aren’t particularly good at (nor should they have to be!). I see a lot of potential in using AI to enrich existing data sets, especially the unstructured data commonly found in Medical Affairs, to enable downstream analytics.

Use Case #3: Scientific Journal Research

Problem Statement: Medical Affairs organizations spend countless hours researching existing bodies of knowledge (e.g., journals) to understand evidence generated in disease areas of interest. The search typically involves scanning both public and private repositories, reading documents and generating summaries for business purposes.

Hypothesis: ChatGPT should be able to read and summarize publicly available literature (e.g., PubMed) so that humans don’t have to.

Setting up the data:

No need to set up any data for this exercise. We’re just going to ask ChatGPT to scan data published to PubMed and summarize what it found!

The analysis:

I instructed ChatGPT to read through all articles published to PubMed related to non-small cell lung cancer (NSCLC) between Jan 1, 2019 and Dec 31, 2020 and provide a short summary, including PubMed IDs in case I’d like to dig a bit deeper. Super simple.

ChatGPT Prompt:

“Ignore all previous instructions before this one. You are a non small cell lung cancer researcher. Provide a short summary of all of the new research published on PubMed for non small cell lung cancer between January 1, 2019 to December 31, 2020. Make sure to include the organizations who sponsored the research, the publication date and PubMed ID. You may quote the source. Any questions?”

Python code:

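```python
import openai  # legacy SDK (openai<1.0)

# The model answers from its training data; it does not query PubMed.
# temperature=0 favors the most likely tokens, making the output close to deterministic.
response2 = openai.Completion.create(
    model="text-davinci-003",
    prompt=prompt,
    temperature=0,
    max_tokens=700,
    top_p=1.0,
    frequency_penalty=0.0,
    presence_penalty=0.0,
)
```

ChatGPT Output: PubMed Summary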

In the two-year period from January 1, 2019 to December 31, 2020, a number of new research studies on non-small cell lung cancer (NSCLC) were published on PubMed.

One study, published on October 15, 2020 and sponsored by the National Institutes of Health, found that a combination of two drugs, nivolumab and ipilimumab, was effective in treating advanced NSCLC. The study, which was published with the PubMed ID PMID: 33015090, concluded that the combination of the two drugs was well tolerated and associated with durable responses.

Another study, published on August 28, 2020 and sponsored by the National Cancer Institute, found that a combination of two drugs, pembrolizumab and chemotherapy, was effective in treating advanced NSCLC. The study, which was published with the PubMed ID PMID: 32845862, concluded that the combination of the two drugs was associated with improved overall survival.

A third study, published on July 15, 2020 and sponsored by the National Cancer Institute, found that a combination of two drugs, atezolizumab and bevacizumab, was effective in treating advanced NSCLC. The study, which was published with the PubMed ID PMID: 32668862, concluded that the combination of the two drugs was associated with improved overall survival. These are just a few of the new research studies published on PubMed for non-small cell lung cancer between January 1, 2019 to December 31, 2020.

Observations

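Well, there you have it! A well-written, to-the-point summary of research on the chosen disease area in PubMed. Of course, this is just an exercise, and I didn’t personally validate the findings. That validation matters: the model has no live access to PubMed and answers from its training data, so the studies, sponsors, dates and PMIDs it lists may be inaccurate or invented and need to be checked against PubMed itself. Still, I see an opportunity to develop job aids that help Medical Affairs stakeholders more easily traverse the vast amount of scientific knowledge that exists in the world.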
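
Since the model can't look anything up, one way to ground this use case is to pull records from PubMed directly and check every PMID the model cites. A minimal sketch using NCBI's E-utilities (a swapped-in technique; the exercise above did not use it): `esearch_url` builds an ESearch query restricted to a publication-date window, `esummary_url` builds a lookup for specific PMIDs, and `cited_pmids` pulls the IDs out of the model's prose. The function names are hypothetical; the endpoints and query parameters (`db`, `term`, `datetype`, `mindate`, `maxdate`, `retmax`, `retmode`, `id`) are E-utilities' own.

```python
import json
import re
from urllib.parse import urlencode
from urllib.request import urlopen

EUTILS = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils"


def esearch_url(term, mindate, maxdate, retmax=20):
    """ESearch URL for PubMed records published between mindate and maxdate (YYYY/MM/DD)."""
    params = {
        "db": "pubmed",
        "term": term,
        "datetype": "pdat",  # filter on publication date
        "mindate": mindate,
        "maxdate": maxdate,
        "retmax": retmax,    # how many PMIDs to return
        "retmode": "json",
    }
    return f"{EUTILS}/esearch.fcgi?{urlencode(params)}"


def esummary_url(pmids):
    """ESummary URL returning titles, journals and dates for specific PMIDs."""
    params = {"db": "pubmed", "id": ",".join(pmids), "retmode": "json"}
    return f"{EUTILS}/esummary.fcgi?{urlencode(params)}"


def fetch_json(url):
    """Network call: fetch and decode an E-utilities JSON response."""
    with urlopen(url) as resp:
        return json.load(resp)


def cited_pmids(text):
    """Extract PMIDs cited in prose such as 'PubMed ID PMID: 33015090'."""
    return re.findall(r"PMID:\s*(\d+)", text)


# Offline usage: build the queries without sending them
print(esearch_url("non-small cell lung cancer", "2019/01/01", "2020/12/31"))
print(esummary_url(cited_pmids("... PMID: 33015090 ... PMID: 32845862 ...")))
```

Fetching an ESearch URL with `fetch_json` returns the matching PMIDs under `esearchresult` → `idlist`, while an ESummary response carries a `result` object keyed by PMID. Comparing each cited entry's title and publication date with the model's description is a quick way to catch fabricated or mismatched citations.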

Final Thoughts

I have to say, I’m thoroughly impressed with this brief exploration of ChatGPT. I see huge potential in applying this technology to common problems faced in the Life Sciences, particularly in Medical Affairs.

The three use cases above are just a few examples of what you can achieve with ChatGPT, and there are countless others that may apply specifically to your organization. If you have any ideas for applying this tech to the life sciences space, I’d love to hear about them. Feel free to reach out to me at greg.nowicki@pwc.com.

For more posts like this one, feel free to subscribe below.

Cheers!

Greg Nowicki